Unit tests for sql scripts with dependencies in Dataform

Do you unit test your data warehouse scripts?

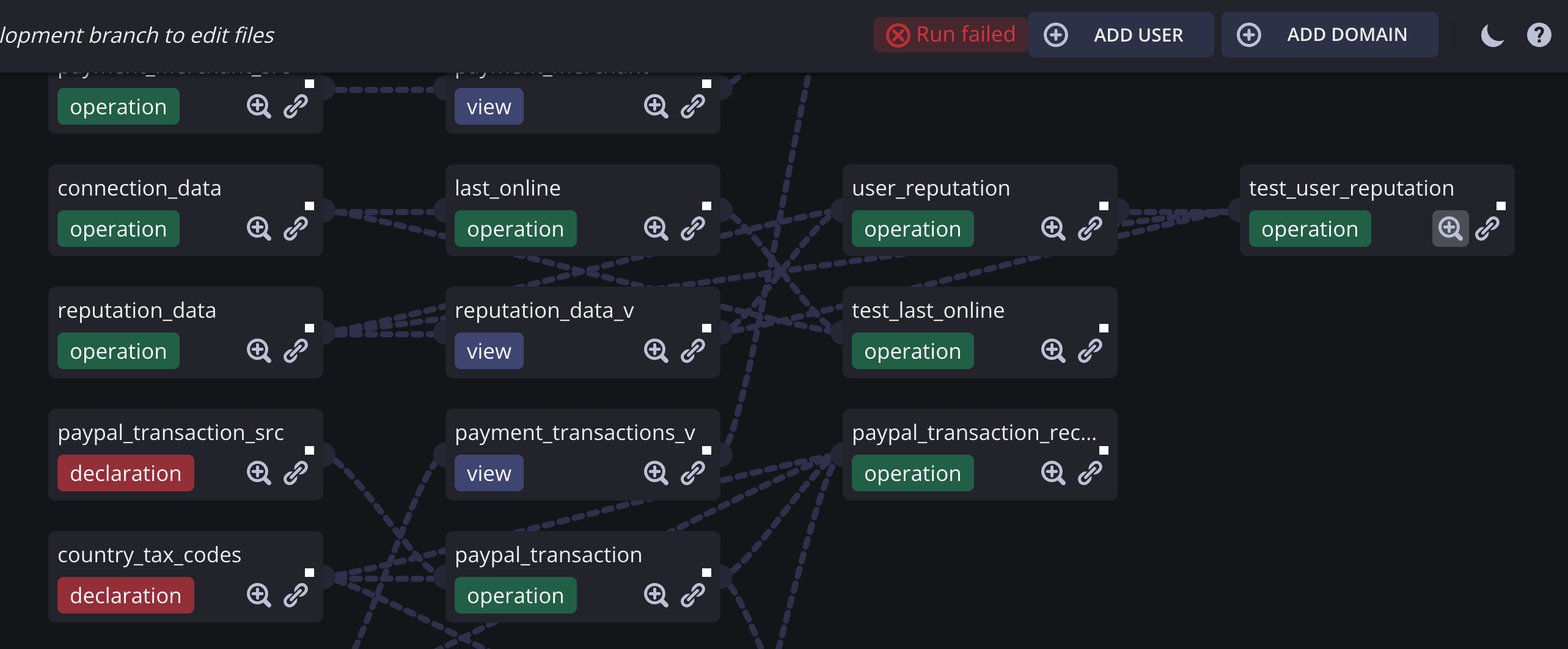

Dataform is a great free tool for SQL data transformation. It helps to keep your data warehouse clean and well-organized. It has nice dependency graphs explaining data lineage and it serves as a single source of truth for everything that happens there.

I am going to talk about unit tests though.

What makes a good unit test?

- It should test expected vs actual reaults

- Should describe the script's logic corresponding to use cases.

- It should be automated.

- Be Independent (tests should not do setup or teardown for one another)

- It should be easy to implement.

- Be Repeatable: Anyone should be able to run it in any environment.

- Once it's written, it should remain for future use.

Dataform supports SQL unit tests for views and you can read about it in Dataform docs [1]. It is indeed simple.

- Let's imagine we have a table:

- Consider this view and imagine you need it always to return three columns which means you would want to unit test it:

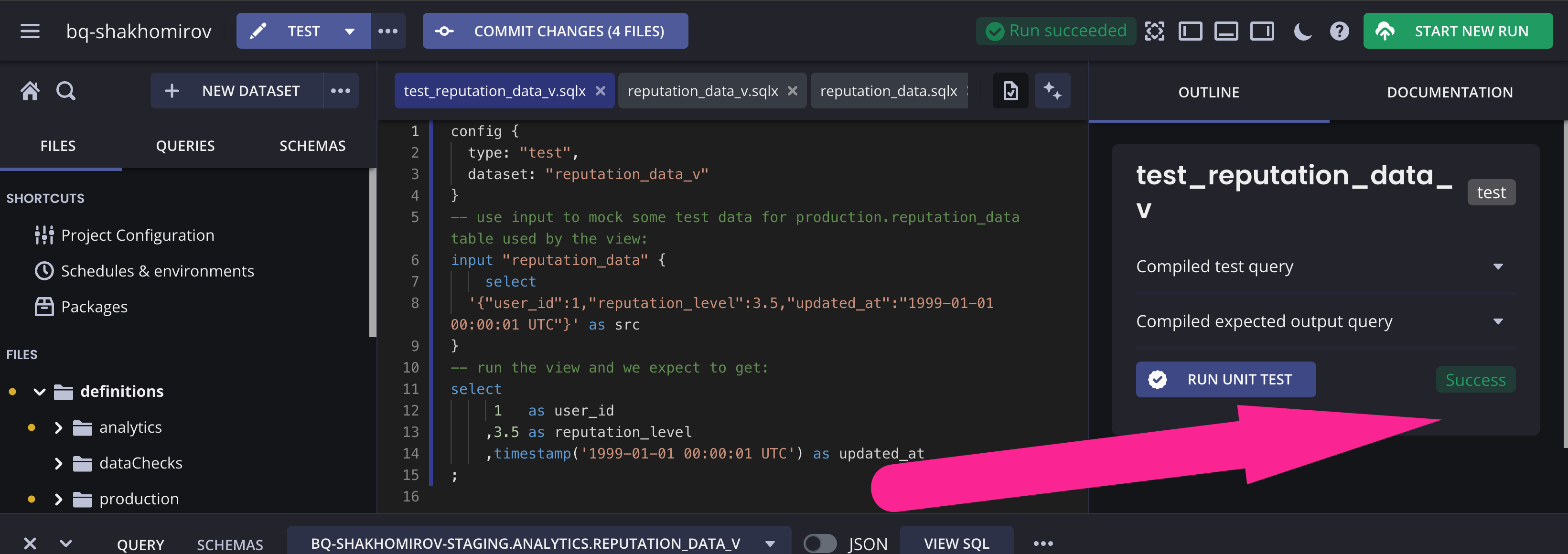

In Dataform unit testing the view is simple:

However, often it is not enough.

You might want to run and test custom SQL operation / a script having multiple statements and more than one output.

Indeed, it becomes tricky when you want to unit test a SQL script which depends on some other actions, i.e. views, scripts, tables, etc. In this case you would want to keep it atomic and run one test for all of them each time you run it again.

Let's imagine we perform incremental updates on our table every day/hour and you would want to unit test that:

How do we create a unit test for that?

- I will use Dataform to create inputs in

*_testsschemas of each dependency. Depending on each table's schema it will create an input, i.e.production_tests.reputation_dataforproduction.reputation_data, etc. - I will run the main operation we are going to test which is

user_reputationand save the actual output in*_testsschema, i.e. inproduction_tests.user_reputation. - I will compare my expected output against that actual output I got earlier.

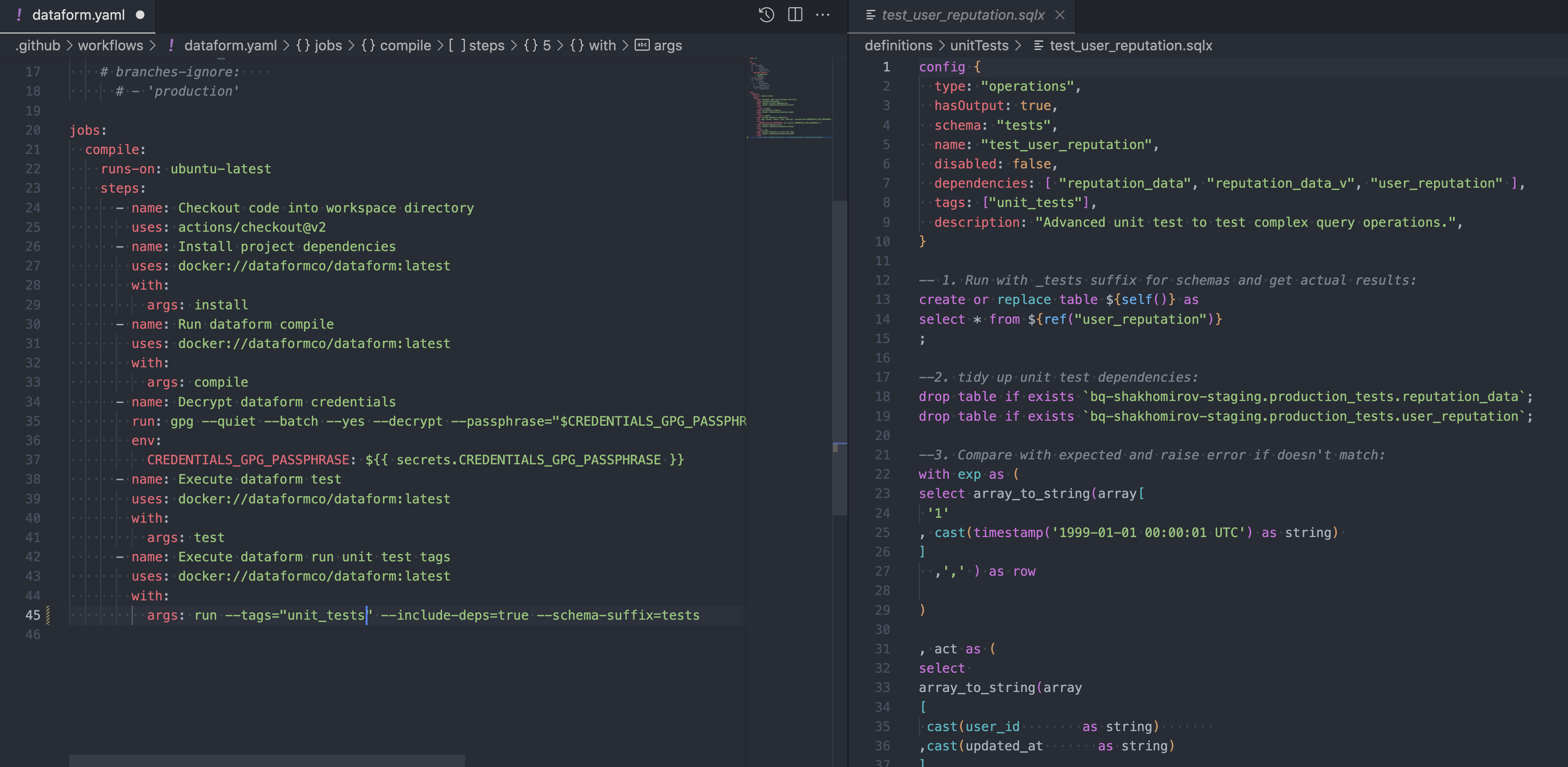

Let's write the unit test for user_reputation script:

A unit test fails if the actual output from the dataset is not equal to the expected output.

It means that:

- the number of output rows must match

- the number of output columns and their names must match

- the contents of each row must match

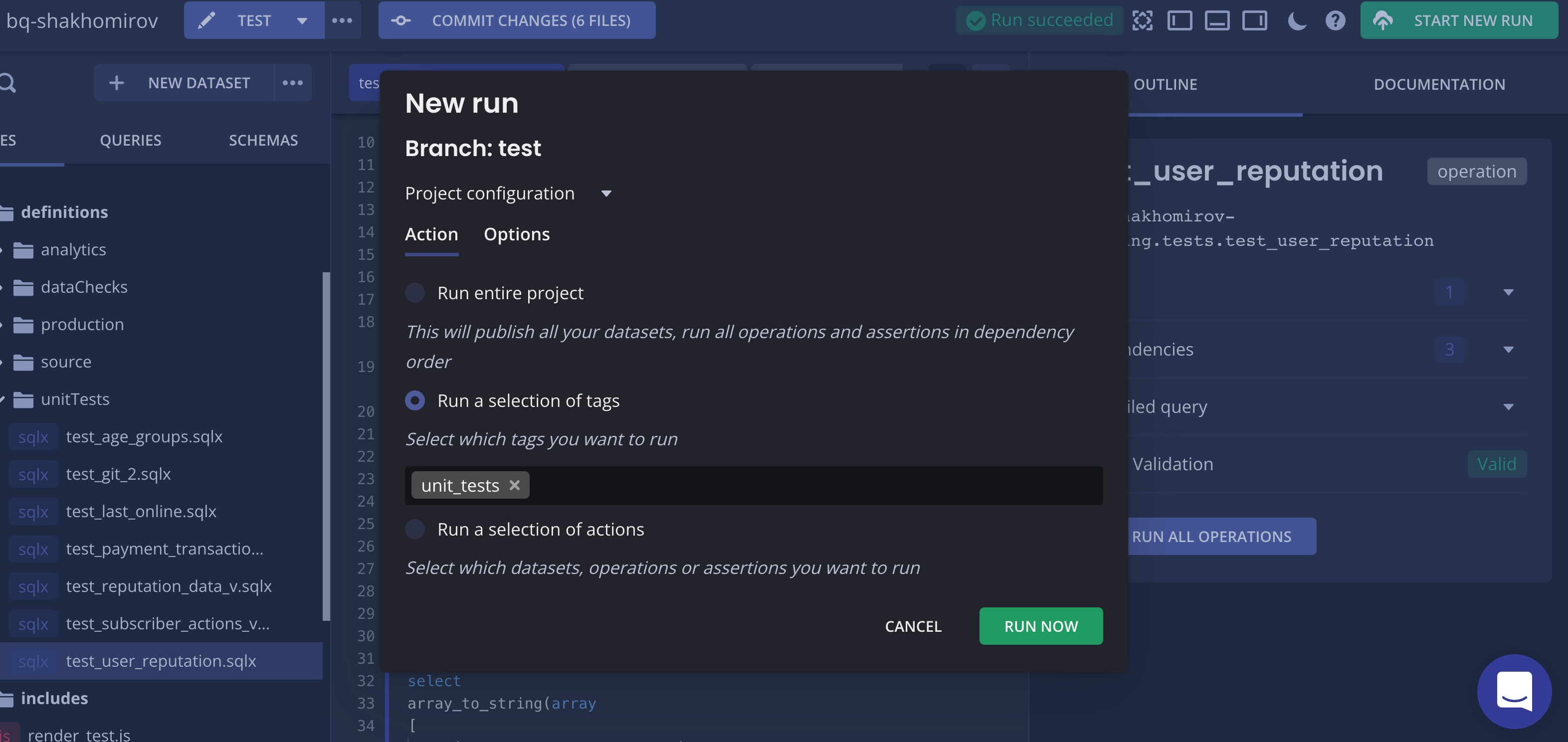

Let's run it:

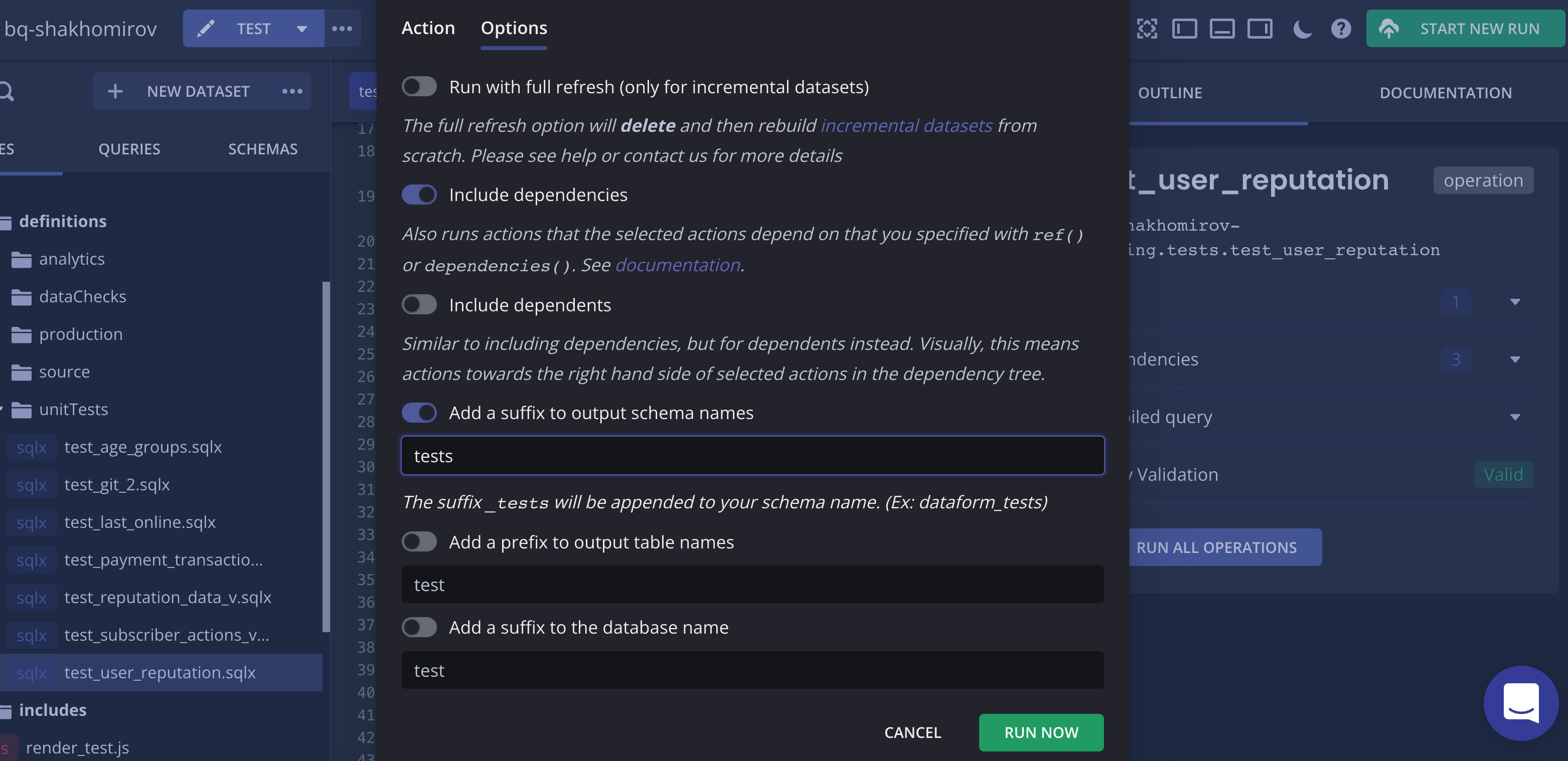

- Run actions with tag

unit_tests. Make sure your scripts have it. - Include dependencies.

- Add schema suffix (

*_tests.any_table).

...

...

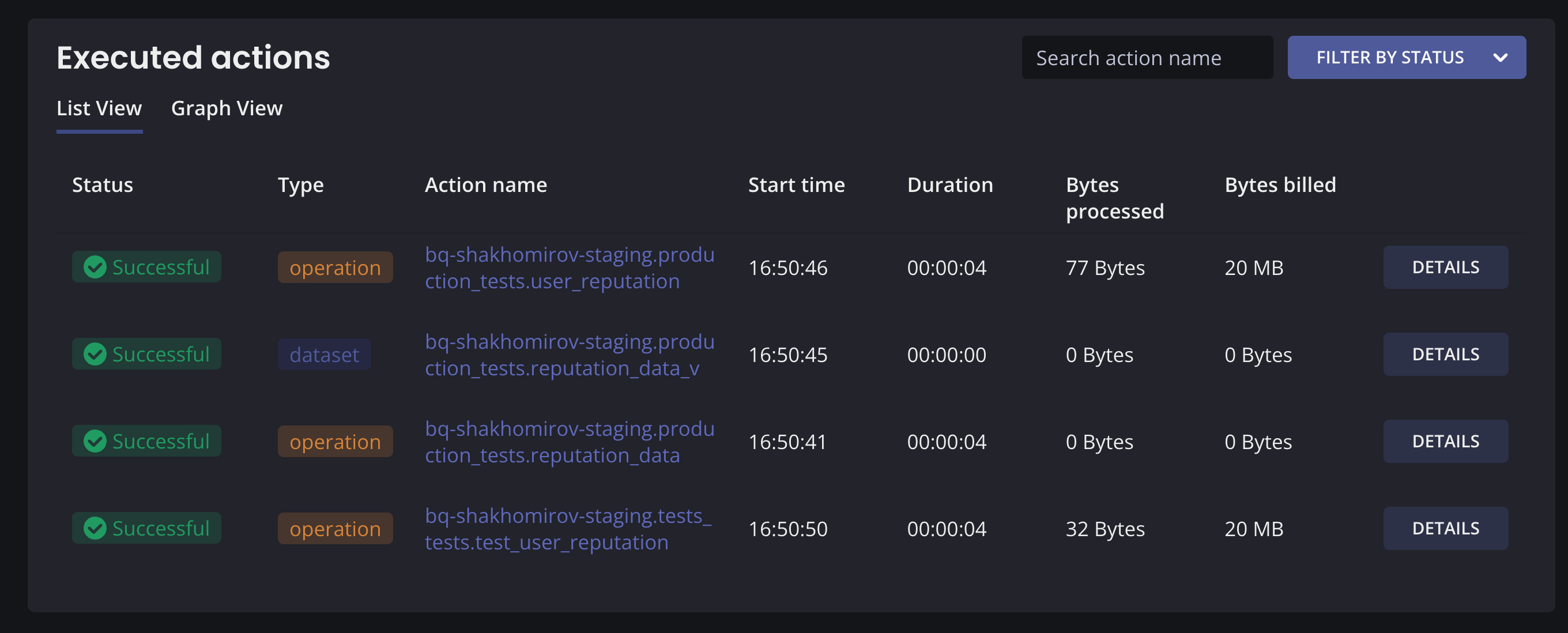

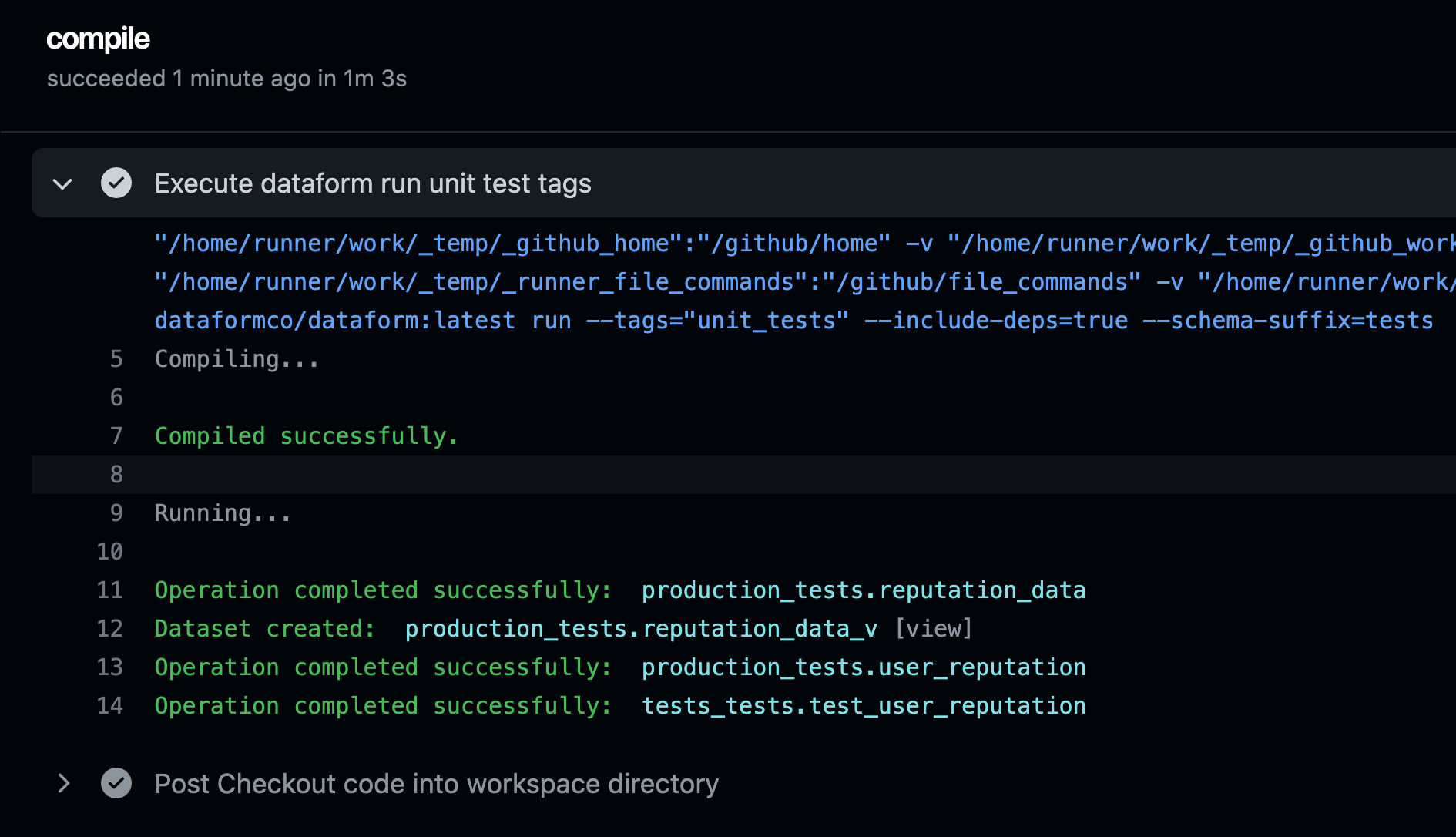

As a result we will see a Pass for our unit test:

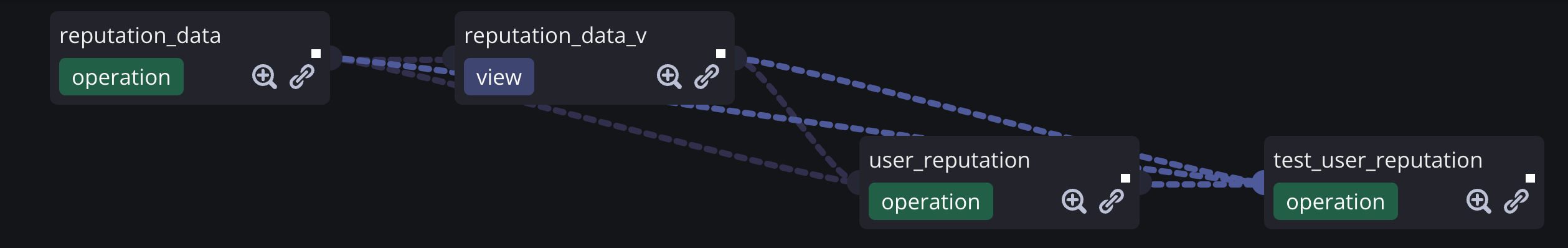

So what has just happened?

Dataform ran all the dependencies but created "fake" outputs in my new

Dataform ran all the dependencies but created "fake" outputs in my new production_tests.* schema so the final script (a unit test script) could use them as inputs.

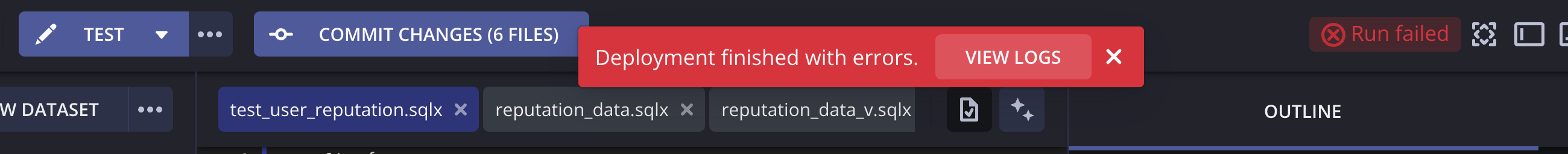

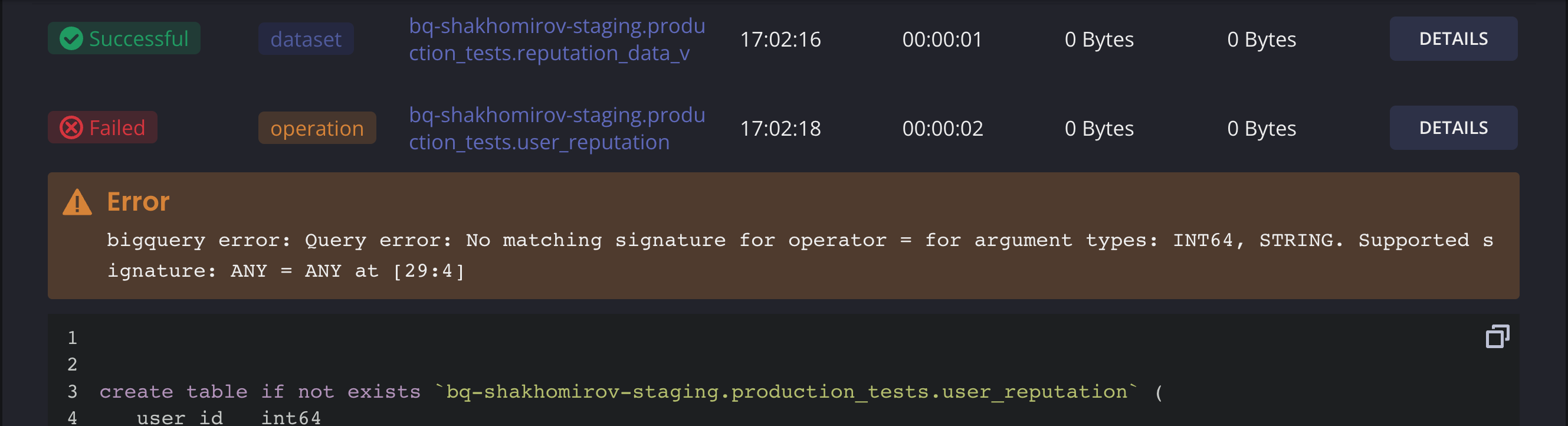

Let's see what happens if someone decides to change the logic of any of these dependencies in that pipeline. I will change the reputation_data_v slightly:

I will run the unit test (test_user_reputation.sqlx) again:

So this is how we run unit tests for SQL scripts in Dataform:

- Run actions with tag

unit_tests. Make sure your scripts has it. - Include dependencies.

- Add schema suffix (

*_tests.any_table).

Dataform has a command line interface so you can run your project's actions from the command line:

Read more about it here: 2

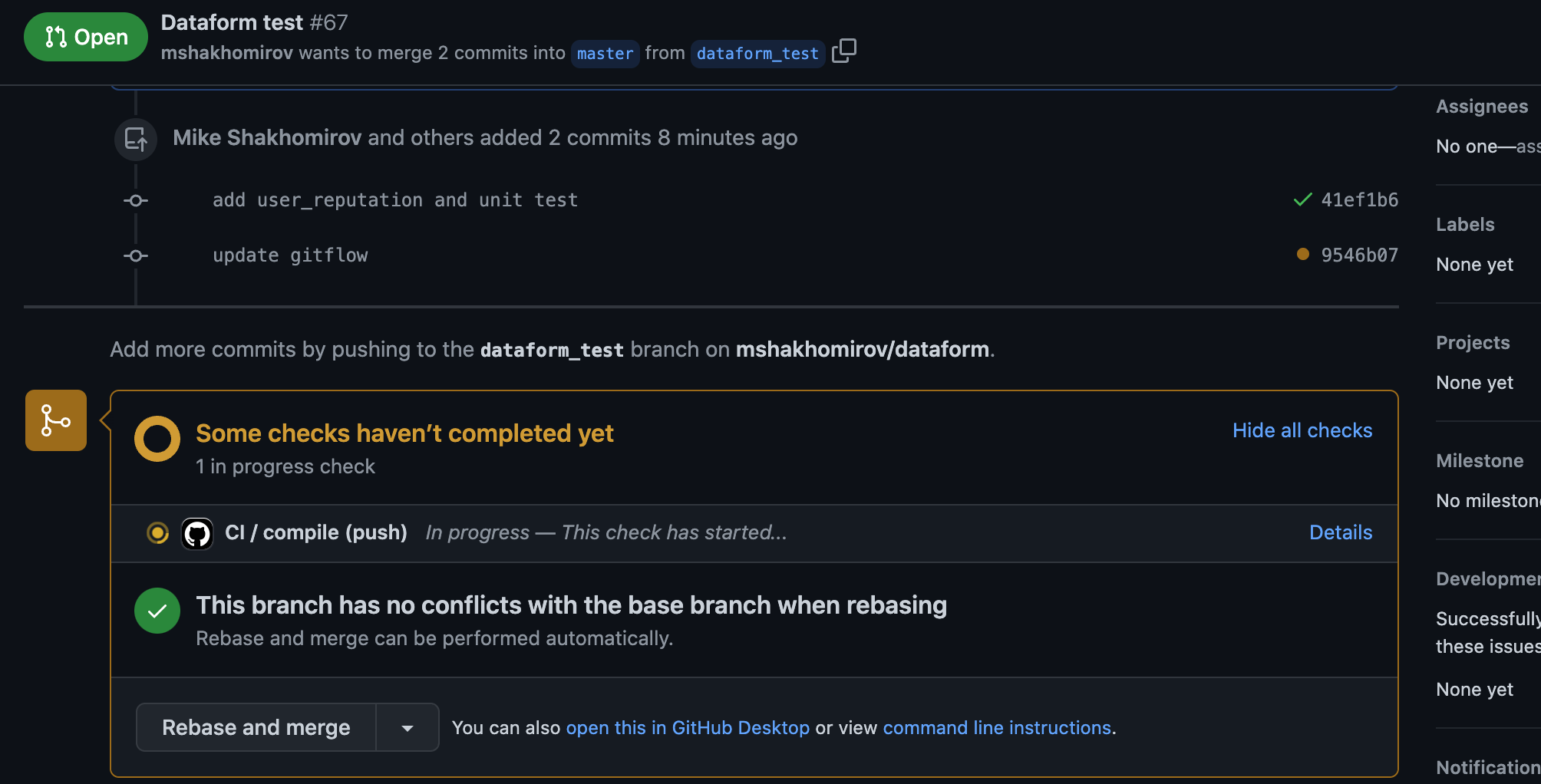

Dataform also has a docker image so you might want to setup a Gitflow pipeline to run unit_tests actions each time you create a Pull Request:

So when you push changes to your repository it will run the checks for your pipelines to see if any logic has been affected:

Conclusion

Dataform is a great tool for data modeling. It's like DBT but written in JavaScript. It has Git and CI/CD features which makes it extremely powerful. It documents SQL script automatically and creates nice dependency graphs which makes it useful as a single source of truth for everyone who is going to use it.

At the moment new Dataform signups are closed as the company was quietly acquired by Google. Dataform is now available in Preview mode in Google Cloud Platform which means that it will become available with a full list of features in a couple of months. I've already tried a couple of things in Preview but still prefer to use the legacy Web UI. If I want to run it as a microservice Dataform has a Docker image which allows doing that.

I couldn't find Dependency graphs and some other important features in Preview version in GCP.

Recommended read:

- 1. https://docs.dataform.co/guides/tests

- 2. https://docs.dataform.co/dataform-cli

- 3. https://docs.dataform.co/guides/ci-cd

Comments

Jen Lopez November 27, 2022

Nice.